Chat3D: Interactive understanding 3D scene-level point clouds by chatting with foundation model for urban ecological construction

Chat3D architecture consisting of Translator and Generator modules

Highlights

- Chat3D: A comprehensive solution that utilizes semantic segmentation, distribution, geographic location, and other information derived from three-dimensional point cloud geographic features as prompts for LLMs.

- Multi-level Prompt Engineering: Utilization of various levels of prompts (coverage, layout/orientation, external geographical knowledge) obtained from 3D point cloud environment perception algorithms.

- Accurate Ecological Assessment: Experimental results show that Chat3D can closely approximate the local eco-environmental index (EI). Specifically, based on Gemini’s calculation, the EI is 82.5, an error of only 3.3 from the officially published result (EI = 85.8).

- Urban Planning Support: The generated reports on urban ecological construction can assess the probability of urban ecological risks and evaluate the rationality of the city’s functional structure and adjustment programs.

Methodology

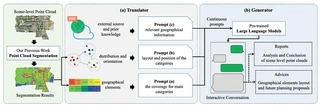

The Chat3D architecture comprises two main components:

Translator: Converts scene-level point cloud semantic segmentation results into textual prompts recognizable by LLMs:

- Prompt (a): Coverage percentages for main categories (vegetation, buildings, water, etc.)

- Prompt (b): Geographical distribution and spatial orientation of categories

- Prompt (c): External geographical information and prior knowledge (climate, hydrology, etc.)
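Prompts (a) and (b) can be illustrated with a minimal sketch. It assumes per-point class labels and planar coordinates are already available from a segmentation model; the label map `CLASS_NAMES`, the function names, and the use of point-count share as a stand-in for coverage are all illustrative assumptions, not the paper's implementation.

```python
import numpy as np

# Hypothetical label map; the real one depends on the segmentation model.
CLASS_NAMES = {0: "trees", 1: "grassland", 2: "buildings", 3: "roads"}


def coverage_prompt(labels: np.ndarray) -> str:
    """Prompt (a): share of points per category, as a percentage.

    Assumption: point-count share approximates ground coverage.
    """
    ids, counts = np.unique(labels, return_counts=True)
    shares = 100.0 * counts / labels.size
    parts = [f"{CLASS_NAMES[int(i)]}: {s:.1f}%" for i, s in zip(ids, shares)]
    return "Coverage by category: " + ", ".join(parts) + "."


def compass_direction(dx: float, dy: float) -> str:
    """Map an offset (x = east, y = north) to one of eight compass sectors."""
    sectors = ["east", "northeast", "north", "northwest",
               "west", "southwest", "south", "southeast"]
    angle = np.degrees(np.arctan2(dy, dx)) % 360.0
    return sectors[int((angle + 22.5) // 45) % 8]


def layout_prompt(xy: np.ndarray, labels: np.ndarray) -> str:
    """Prompt (b): direction of each category centroid from the scene center."""
    center = xy.mean(axis=0)
    parts = []
    for i in np.unique(labels):
        dx, dy = xy[labels == i].mean(axis=0) - center
        parts.append(f"{CLASS_NAMES[int(i)]} mainly {compass_direction(dx, dy)}")
    return "Layout relative to scene center: " + "; ".join(parts) + "."
```

Prompt (c) is not derived from the point cloud, so in a sketch like this it would simply be a hand-written string describing climate, hydrology, and similar context.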

Generator: Employs pre-trained LLMs (ChatGPT, GPT-4, Gemini) to:

- Calculate ecological environment indices (EI, VI, RI, LI, BI)

- Generate comprehensive ecological analysis reports

- Provide layout optimization suggestions for sustainable urban development
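As a rough sketch of how the Generator step might be wired up, the three prompts can be joined into a single request and passed to a model. The request wording and the names `build_request` and `generate_report` are hypothetical, and the model is injected as a plain text-in, text-out callable so that no particular vendor API is assumed.

```python
from typing import Callable


def build_request(coverage: str, layout: str, knowledge: str) -> str:
    """Join prompts (a)-(c) and state the three Generator tasks."""
    return "\n".join([
        "You are assisting with an urban ecological assessment.",
        f"(a) {coverage}",
        f"(b) {layout}",
        f"(c) {knowledge}",
        "Tasks: 1) estimate the indices EI, VI, RI, LI and BI; "
        "2) write an ecological analysis report; "
        "3) suggest layout optimizations for sustainable development.",
    ])


def generate_report(request: str, llm: Callable[[str], str]) -> str:
    """Send the request to any text-in, text-out model wrapper."""
    return llm(request)


def stub_llm(prompt: str) -> str:
    """Placeholder model used only to make the sketch runnable offline."""
    return f"[stub reply to a {len(prompt.splitlines())}-line request]"


request = build_request(
    "Coverage by category: trees: 75.0%, buildings: 25.0%.",
    "Layout relative to scene center: trees mainly west; buildings mainly east.",
    "Subtropical coastal climate (illustrative placeholder text).",
)
report = generate_report(request, stub_llm)
```

Keeping the model behind a callable makes it straightforward to run the same request against several LLMs and compare their answers.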

Experimental Results

Experiments were conducted on the SYSU9 dataset (Sun Yat-sen University Zhuhai Campus, ~200 million points, 3.571 km²). The study area was segmented into 10 categories, including trees, grassland, buildings, vehicles, and roads.

Ecological Index Calculation Comparison:

| Model | Eco-environmental Index (EI) | Error vs. Ground Truth |
|---|---|---|
| ChatGPT | 62.7 | -23.1 |
| New Bing (GPT-4) | 93.0 | +7.2 |
| Gemini | 82.5 | -3.3 |
| Ground Truth | 85.8 | - |
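The error column is the signed difference between each model's EI and the ground truth (predicted minus official value). The short check below reproduces it from the reported figures; the variable and function names are illustrative.

```python
GROUND_TRUTH_EI = 85.8  # officially published EI

# EI values reported for each model in the comparison table
predicted_ei = {
    "ChatGPT": 62.7,
    "New Bing (GPT-4)": 93.0,
    "Gemini": 82.5,
}


def signed_error(predicted: float, truth: float = GROUND_TRUTH_EI) -> float:
    """Predicted minus ground truth, rounded to one decimal place."""
    return round(predicted - truth, 1)


errors = {model: signed_error(value) for model, value in predicted_ei.items()}
closest = min(errors, key=lambda model: abs(errors[model]))

print(errors)   # {'ChatGPT': -23.1, 'New Bing (GPT-4)': 7.2, 'Gemini': -3.3}
print(closest)  # Gemini
```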

Citation

    @article{chen2024chat3d,
      title={Chat3D: Interactive understanding 3D scene-level point clouds by chatting with foundation model for urban ecological construction},
      author={Chen, Yiping and Zhang, Shuai and Han, Ting and Du, Yumeng and Zhang, Wuming and Li, Jonathan},
      journal={ISPRS Journal of Photogrammetry and Remote Sensing},
      volume={212},
      pages={181--192},
      year={2024},
      publisher={Elsevier},
      doi={10.1016/j.isprsjprs.2024.04.024}
    }

I am currently a Ph.D. candidate at the Ai4City-Lab, Urban Governance and Design Thrust, Society Hub, The Hong Kong University of Science and Technology (Guangzhou), under the supervision of Prof. Wufan Zhao and Prof. Yuan Liu. Prior to this, I obtained my Master’s degree from the School of Geospatial Engineering and Science, Sun Yat-sen University, where I was advised by Prof. Wuming Zhang and Prof. Yiping Chen.

My research focuses on 3D visual perception, intelligent interpretation and processing of point cloud data, and multi-modal urban foundation models. I am particularly interested in bridging geometric understanding with semantic reasoning in large-scale urban environments, with an emphasis on open-vocabulary learning, training-free paradigms, and cross-modal fusion between 2D and 3D data.

My goal is to develop scalable, interpretable, and generalizable AI systems for urban analysis, enabling applications such as digital twin construction, urban scene understanding, and intelligent infrastructure management.